Fast Fact Check: Does Hep B Vaccination Cause Autism?

Obviously not

This was a timed post. The way these work is that if it takes me more than an hour-and-a-half to complete the post, an applet that I made deletes everything I’ve written so far and I abandon the post. You can find my previous timed post here.

The algorithm over on Twitter updates too quickly. If you like a post, you’ll get a lot of exactly that style of post on your feed, even if you don’t care for it. One of the ways that’s showing up for me recently is in a surge in posts kvetching about the latest anti-vaccination nonsense. An example from the Joe Rogan Show stuck out to me: in it, the interviewee makes his case that early hepatitis B vaccination does, in fact, cause autism, and he makes it on the basis of just one study: Gallagher and Goodman (2010).

After the very obvious isolated demands for rigor—around 1:16—the guest says:

There is one study out there regarding Hep B vaccines and autism. It’s from Gallagher and Goodman out of University of Stony Brook, it’s in the peer-reviewed literature, and it showed that kids that got Hep B vaccines versus those that didn’t in the first six months of life had three-times the rate of autism—statistically significant. Gallagher/Goodman, University of Stony Brook, it’s on PubMed. That is the only study of Hep B vaccine and autism that you will find in the peer-reviewed literature. If you do it based on science, on the published literature, that’s the only one out there.

The study he mentions is, indeed, the only published study on the highly-specific topic of hepatitis B vaccination in the first six months of life. It is an atrocious study—as all anti-vaccine studies have proven to be—and we are lucky that its data is public.

Bad Studies With Public Data Fall Apart Readily

One of the virtues of economists is that, for papers published in high-profile venues, they demand a replication package (a collection of all the code and, usually, the data required to replicate a set of findings) to ensure researchers ran the tests and got the results they claimed to. Unfortunately, a replication package is not always available. In those cases, fraudulent analysis, fraudulent data, and fraudulent presentation can linger, sometimes for many years, with significant effects on real-world policies and, in some instances, even on court cases.

Gallagher and Goodman’s (G&G) study uses the National Health Interview Survey (NHIS). Given that the NHIS data is public, we’ll be able to be virtuous just like the economists: we’ll be able to check if G&G’s results hold up!

Reproducing Results

The first thing to do is to mimic their exact model specification. G&G presented results from two models but ran several more. The ones they presented results from were univariate and multivariate logistic regressions using hepatitis B vaccination to predict parent-reported autism diagnoses. Can we reproduce them? For the most part, yes!

To reproduce G&G’s results, we have to subset the NHIS down to (1) just boys, (2) aged 3-17, (3) who have shot records, (4) and were born before 1999, (5) and had files containing autism and neonatal status, (6) and non-missing covariates, for the multivariate model. The outcome of interest is parent-reported autism diagnosis and having that on file was also required to enter the sample. This pares down the sample from 86,996 to 44,841 (boys-only), to 33,710 (ages 3-17), to 7,936 (shot records), to 7,737 (born before 1999), to 7,454 (autism and neonatal status), and finally, to 7,055 (no missing covariates).1
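To see where the sample actually goes, here is a minimal sketch that tabulates the attrition at each filter. The counts are the ones quoted above; the labels and the `attrition` helper are my own naming, not anything from G&G's code.

```python
# Sample sizes after each successive filter, as quoted in the text above.
funnel = [
    ("full NHIS sample", 86_996),
    ("boys only", 44_841),
    ("ages 3-17", 33_710),
    ("has shot records", 7_936),
    ("born before 1999", 7_737),
    ("autism and neonatal status on file", 7_454),
    ("no missing covariates", 7_055),
]

def attrition(steps):
    """Return (label, n, share of previous step kept, share of original kept)."""
    rows = []
    start = steps[0][1]
    prev = start
    for label, n in steps:
        rows.append((label, n, n / prev, n / start))
        prev = n
    return rows

for label, n, kept_step, kept_total in attrition(funnel):
    print(f"{label:<36} {n:>6}  {kept_step:6.1%} of previous  {kept_total:6.1%} of original")
```

The shot-record requirement is by far the largest cut: it keeps a bit under a quarter of the boys aged 3-17, and the final analytic sample is roughly 8% of the original file.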

The univariate and multivariate results mostly hold up, even though I did not have their original SAS code available to handle the data exactly how they did. The differences in data parsing likely explain why when I used their specified sample, I found two more autism cases than they did. Who knows why that happened? But at least we got basically the same statistics, confirming that I am ‘good to go’ for deeper analysis. Let’s start said deeper analysis by talking about multiple comparisons.

Multiplicity

As you perform more and more statistical tests, the odds of getting a statistically significant result drift higher and higher. This means that a p-value of 0.05 is not that surprising once you’ve run a test ten times; at that point, it’s more like a p-value of 1-(1-0.05)^10 ≈ 0.40, assuming independent tests.2 To ensure a reported p-value maintains its nominal interpretation (i.e., that a p-value of, say, 0.05 actually means ‘a 5% chance of a result at least as extreme under the null’), researchers have to use multiple comparisons corrections, like the Bonferroni correction,3 to either adjust the thresholds for significance or adjust the p-values themselves to control the error rate.
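The arithmetic above is easy to check. This is a small sketch of the family-wise error rate under the same independence assumption; the function name is mine.

```python
def familywise_error_rate(alpha: float, n_tests: int) -> float:
    """Chance of at least one false positive across n independent tests."""
    return 1.0 - (1.0 - alpha) ** n_tests

# How quickly a nominal 5% error rate inflates as tests pile up.
for k in (1, 5, 10, 20):
    print(f"{k:>2} tests -> {familywise_error_rate(0.05, k):.2f}")
```

With ten tests the rate is about 0.40, matching the figure in the text. Correlated tests inflate more slowly than this, which is why the independence assumption is stated.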

However, because G&G conducted an unknown number of tests, we cannot know the size of the ‘family’ to adjust for. We know that G&G ran a lot of tests, so the adjustment must be large. At a minimum, they tested the primary exposure coefficient across the univariate and multivariate models for the boys, and they reported those results. The analyses they say they ran without reporting results include the girls’ versions of the boys’ models they showed, the boys’ model with no birth year restriction, the boys’ model with and without mothers’ high school graduation or higher as a covariate, a test of the relationship between hepatitis B vaccination and a non-autism composite, and tests of vaccine-autism relationships for the varicella and MMR vaccines. Count just these and the associated tests in their models and you get to 35 individual parameter tests at minimum, not even counting whether they also ran univariate models for girls without birth year restriction, whether they ran a sex-combined model before deciding to stratify, and any exploratory looks based on the ethnic subgroups whose differences from the main sample they highlighted in their Table 2B.

G&G obviously ran a huge number of tests, but even adjusting for just the four univariate tests eliminates their main results. With just the four univariate tests in the family, the hepatitis B result is not robust to Bonferroni correction (the most conservative; a passing p-value becomes 0.0125), to Holm-Bonferroni (less conservative), or even to Benjamini-Hochberg correction (even less conservative). The only variable that passes is that two-parent households come with significantly less autism, and that’s not their focal hypothesis and also not biologically sensible at all.
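For readers who have not seen these three corrections side by side, here is a minimal pure-Python sketch of how each one decides which tests to reject. The four p-values are made up purely to show how the procedures differ in strictness; they are not G&G's numbers or mine.

```python
def bonferroni(pvals, alpha=0.05):
    """Reject only p-values at or below alpha divided by the family size."""
    m = len(pvals)
    return [p <= alpha / m for p in pvals]

def holm(pvals, alpha=0.05):
    """Step-down: test smallest p first against alpha/m, then alpha/(m-1), ..."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])
    reject = [False] * m
    for rank, i in enumerate(order):
        if pvals[i] <= alpha / (m - rank):
            reject[i] = True
        else:
            break  # once one test fails, all larger p-values fail too
    return reject

def benjamini_hochberg(pvals, alpha=0.05):
    """Step-up FDR control: reject every p-value ranked at or below the
    largest rank k whose p-value satisfies p <= (k / m) * alpha."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])
    max_rank = 0
    for rank, i in enumerate(order, start=1):
        if pvals[i] <= rank / m * alpha:
            max_rank = rank
    reject = [False] * m
    for rank, i in enumerate(order, start=1):
        if rank <= max_rank:
            reject[i] = True
    return reject

# Hypothetical p-values for a family of four tests (illustration only).
pvals = [0.001, 0.020, 0.030, 0.400]
print("Bonferroni:        ", bonferroni(pvals))
print("Holm-Bonferroni:   ", holm(pvals))
print("Benjamini-Hochberg:", benjamini_hochberg(pvals))
```

In this toy family, Bonferroni and Holm keep only the smallest p-value, while Benjamini-Hochberg keeps the first three. A result that fails even Benjamini-Hochberg, as the univariate hepatitis B result does, is failing the most lenient of the three.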

More pointedly, using the p-values from our reproduction of G&G’s results, being non-Hispanic White as opposed to anything else and coming from a two-parent household both retain their significance, but the hepatitis-autism association is never significant in the first place, let alone after any corrections.

Even going multivariate and using the same extremely favorably limited corrections, hepatitis B does not survive Bonferroni or Holm-Bonferroni correction, and it squeaks by Benjamini-Hochberg (0.031 vs. a threshold of 0.0375) in their specification, but not in our reproduction (in which it’s nonsignificant to start, despite our finding two additional cases). With the closer-to-correct family size of at least 35, nothing they found is significant and everything is far from it.
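The thresholds quoted above follow from simple arithmetic, sketched here. The p-value of 0.031 and the 0.0375 cutoff come from the text; treating hepatitis B as the third-smallest of four p-values is my inference from that cutoff (3/4 × 0.05 = 0.0375), and the function names are mine.

```python
ALPHA = 0.05
p_hep_b = 0.031  # multivariate p-value in G&G's specification, as quoted above

def bonferroni_threshold(m, alpha=ALPHA):
    """Per-test cutoff when correcting for a family of m tests."""
    return alpha / m

def bh_threshold(rank, m, alpha=ALPHA):
    """Benjamini-Hochberg cutoff for the p-value at a given rank (1 = smallest)."""
    return rank / m * alpha

# Assumed: hepatitis B sits at rank 3 in a family of 4.
print("BH cutoff, rank 3 of 4:  ", bh_threshold(3, 4))
print("passes BH (family of 4): ", p_hep_b <= bh_threshold(3, 4))
print("passes Bonferroni (4):   ", p_hep_b <= bonferroni_threshold(4))
print("Bonferroni cutoff (35):  ", round(bonferroni_threshold(35), 5))
print("passes Bonferroni (35):  ", p_hep_b <= bonferroni_threshold(35))
```

With a family of 35, the Bonferroni cutoff drops to roughly 0.0014, more than twenty times smaller than the p-value in question.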

Conclusion I: G&G’s result is not robust to attempts to reproduce their exact sample and specification or to multiple comparisons correction. Their results are marginal and in the range of 0.01 to 0.05, making them especially likely to have been p-hacked.

Robustness to Their Sampling Choices

Let’s run through the models G&G ran but didn’t report on. This means going beyond reproducing their results: adding the data from 2003 (which they had access to but decided to omit for some unknown reason), removing the birth year restriction so we use all birth years and not just those born before 1999 (a restriction they justified via thimerosal exposure), and then using the results for both sexes.

Their results are not robust and are borderline at best in each year before adding sex. The only reason the authors supply for stratifying by sex in the first place is that boys have an autism rate that’s more than four-times the female rate. That’s not even a justification, really, it’s just a background fact and not a preregistered analytical decision, so it makes no sense to have done it in the first place.

In fact, the authors said the finding that for girls there was a marginally significant protective effect was “paradoxical”. How? There’s no paradox here at all; they just used that to dismiss having to report the results for the girls, almost certainly because those results contradicted their anti-vaccine thesis. But though the girls’ results are treated as an anomaly, they are just as real and legitimate as the boys’ results. The authors offered quite literally no reason whatsoever for dropping them, and there is no good reason to think they should be dropped. If there is a biological effect of vaccination on autism risk, we have zero reason to think it’s sex-specific.

Conclusion II: G&G’s result is not robust to their sampling choices. The biggest issue is arbitrarily dropping girls, which provided them with their marginally-significant headline results. Correcting this renders their results nonsignificant.

Robustness to Their Covariate Choices

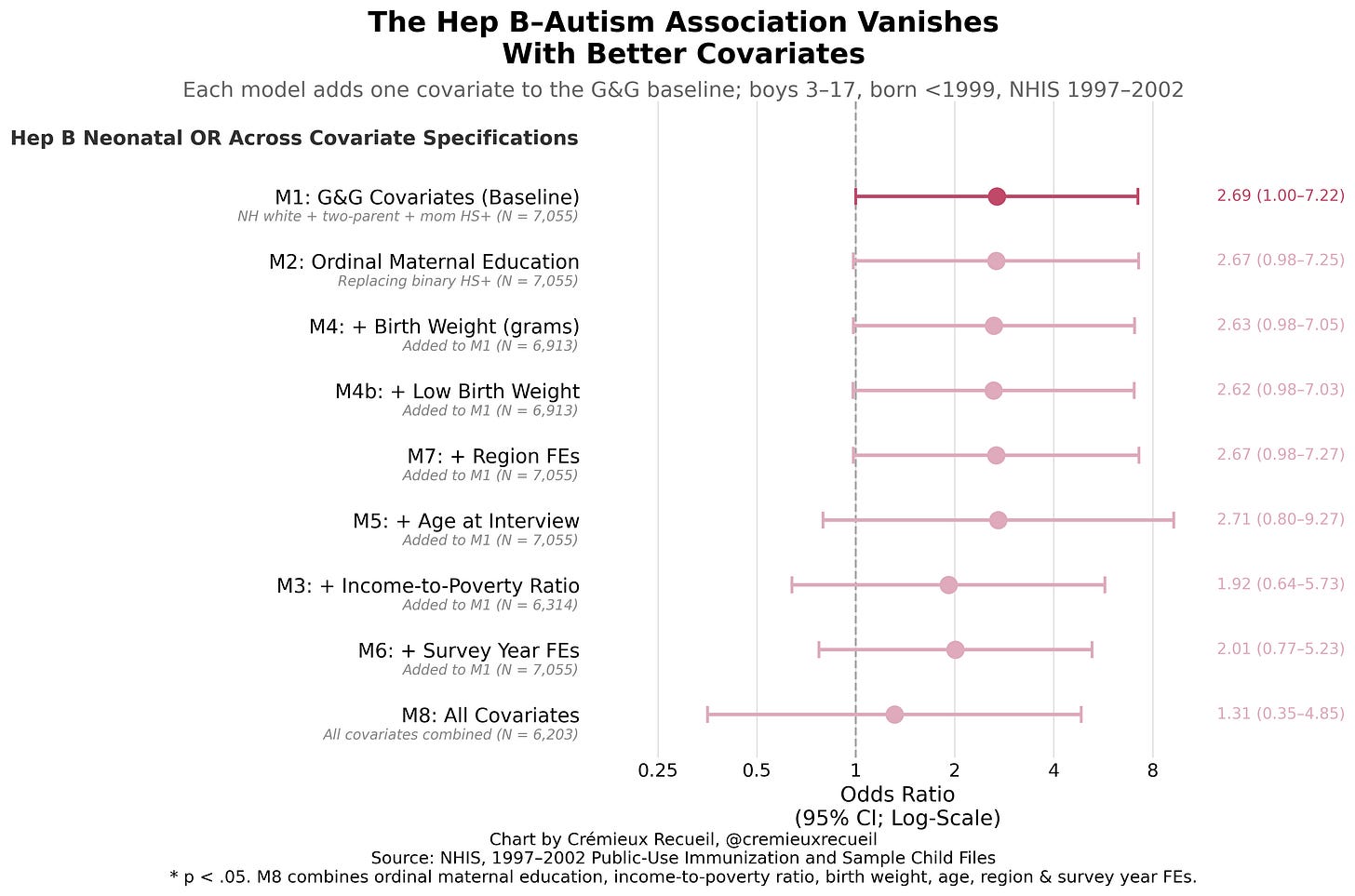

G&G used three controls in their analyses: non-Hispanic White race vs. the rest, two-parent households vs. the rest, and maternal education being high school or higher vs. the rest. They justified these on the basis of prior literature.

For the race control, they said that “black race has been shown to be associated with increased risk for autism” but also that another recent study had found “that nonwhite children showed decreased risk for autism diagnosis” and yet another had found “Black race [is] associated with later autism diagnosis.” They found that being non-White was associated with roughly 65% lower odds of being diagnosed with autism.

For the two-parent household control, they said that “Absence of one parent was shown to be a risk factor for delay in vaccination” and “decreased risk for autism diagnosis.” They found that two-parent households had about 70% lower odds of autism diagnoses, so evidently the latter confounder wins! (For clarity, I don’t believe this is explanatory.)

For the education control, they said that “Higher maternal education level has been associated with greater likelihood of autism diagnosis.” They found that the higher-educated mothers were about 2.3-times as likely to register a child having a diagnosis.

G&G had literature justifications, but the set of justifications is incredibly sparse, especially given that several of their citations mentioned other possible controls that they had access to! For example, they’re missing birth weight, gestational age, maternal age, parity, healthcare utilization, insurance status, urbanicity, etc. Moreover, some of their cited risk factors now go the opposite way in more modern data: nowadays, Blacks get higher rates of diagnosis, the low-educated do too, and single mothers get their kids diagnosed more often. This is likely because people now see autism diagnoses as a way to obtain access to services, and those groups use said services more often.

G&G even noted that earlier authors had discussed selection bias that they could have addressed, and they didn’t! Quote: “van Damme et al. (2000) suggested that selection bias might play a role because parents of children diagnosed with a medical condition would be more likely to seek medical advice and preventive care for their child.” When we control for healthcare utilization, however, the result is never robust:

When we add in other theoretically-justified controls, without or in addition to our healthcare utilization controls, the result is, once again, not robust. Notably, even just switching to a continuous measure of maternal education instead of an inappropriately discretized one (discretizing lowers statistical power) is sufficient to take the result fully nonsignificant.4

Combine these important—as acknowledged by G&G and their citations!—controls and you get a very clearly null result. Remove the inappropriate sex stratification too, and this is just such an obvious nothingburger of a result:

Conclusion III: G&G’s result is not robust to their covariate choices. Moreover, they excluded several covariates they or their citations mentioned as important, while handicapping one of their covariates they did use by inappropriately discretizing it. With any of these, their result would have gone from slightly below the significance threshold to slightly above it. They quite evidently p-hacked their way to their result.

Now They’re Just Being Unkind

At this point in the paper, G&G seem to insult the reader’s intelligence. Though they’re concerned with neonatal males, they start talking about injections that are typically not given to neonates. They write:

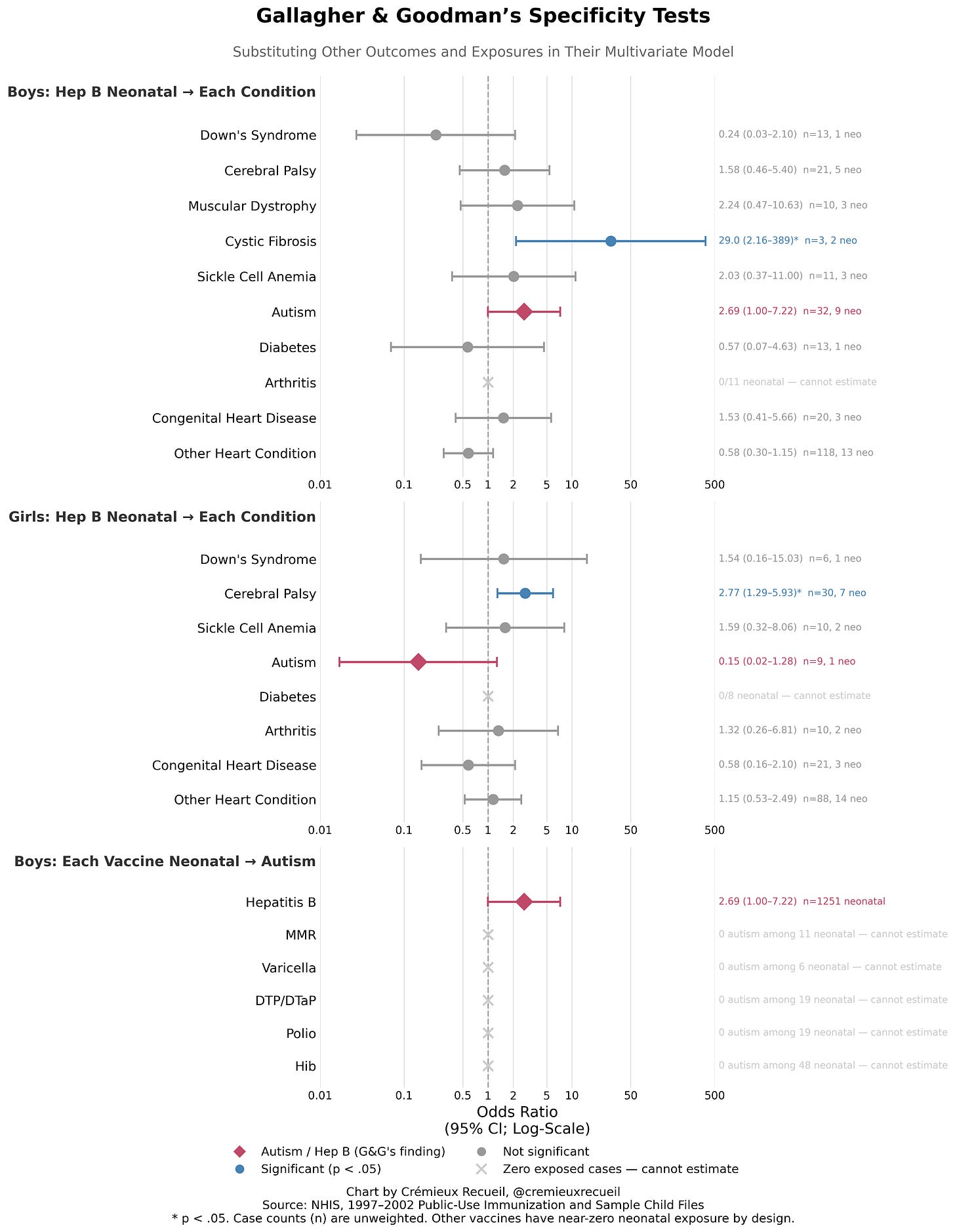

Specificity of the exposure was also tested by separately substituting the varicella and measles–mumps–rubella vaccinations for the neonatal hepatitis B vaccination in the model for autism; again, significant associations were absent.

Varicella? Given at 12-15 months. MMR? 12-15 months as well. Hib? Polio? Two months. DTP/DTaP? 2, 4, and 6 months.

Though they portray this test as evidence that the effect is specific to the hepatitis B vaccine, what they are in fact telling you is that they don’t expect you to check their results, because this obviously cannot be significant. The data shows only 11 neonatal male MMR injections, 6 for varicella, 19 for DTP/DTaP and another 19 for polio, and just 48 for Hib. These are more likely to be data entry errors than neonatal vaccination!

There were zero autism cases among this group, for the simple reason that both vaccination and autism are rare. As a result, we must be underpowered, and we must be getting misled by G&G. Or they’re just that daft. Jury’s out.

Even being generous to G&G and assuming that by “substituting”, they are referring to ever receiving the varicella or MMR vaccines at later ages, this provides a completely different exposure window, so it’s not a valid specificity test! Either they checked an empty test or an invalid comparison, but regardless, they duped reviewers. A real specificity test was unavailable to them, because to do it would require another vaccine that’s also routinely given at birth with substantial uptake, and hepatitis B is essentially the only one in the U.S. schedule, which makes a test impossible.

Conclusion IV: G&G’s claimed vaccine specificity tests were impossible to run with their data. And yet, they claimed to run them. They have to be dishonest, daft, or both.

They Don’t Expect You To Check, But They’re Lying

To show that the disease effect is specific to autism, G&G claimed to have run a bunch of other analyses of different conditions.

First, to test for disease specificity of the outcome affected by the exposure, parental report of any one or more of a group of outcomes with no known relation to autism, autoimmunity, or vaccines— i.e., Down’s syndrome, cystic fibrosis, cerebral palsy, congenital or other heart problems—was substituted for autism diagnosis in the multivariate logistic regression model; null effects were found.

The problem here is one that I never would have expected a non-methodologist to catch on even a careful read. It’s either nefarious or stupid:

G&G created a composite outcome that was based on various rare problems to check against for hepatitis B vaccine effects, but said composite is full of empty outcomes because of age-at-diagnosis issues and rarity leading to tiny case counts, so the result is an artificial, constructed null. It being null (OR = 0.88, p = 0.65) isn’t even good for their case, since the result is not significantly different from their headline result (p = 0.054)!

Unless G&G are just really bad at being scientific, what they did was malicious. And it’s not even the correct way to test for specificity by disease! You do not throw together a bunch of random diseases, because there is no biological sense to that. What pathway is expected to elicit effects from vaccination to… a composite of a bunch of random, disconnected diseases? It’s theoretical nonsense.

So, splitting it up, what do we see? Well, we do not see disease specificity. Take a look:

Instead of seeing a lack of association as G&G wrongly claimed, we see associations with some other diseases, and these associations are actually more robust than the male-only autism association. Even worse, the associations are impossible! Cystic fibrosis is fully genetic, caused by mutations in the CFTR gene. It cannot have anything to do with vaccination! For girls, we see a significant—and still, stronger than the male autism association—effect on cerebral palsy, but cerebral palsy is caused by prenatal or neonatal hypoxia, infections, trauma, prematurity, etc.

The idea of these things being caused by vaccination is preposterous. They are far more likely to reflect a bias—like one that would be obtained through increased healthcare utilization as a confounder—or an error, and they are obviously virtually meaningless, since they’re based on so few cases.

Conclusion V: G&G’s claimed disease specificity was misleading in the extreme, and actually testing their claims, we see that the supposed ‘effect’ of hepatitis B vaccination is, in fact, not specific to autism. This analysis was so incompetently done that it had to be an indictment of G&G’s abilities or an indictment of their character.

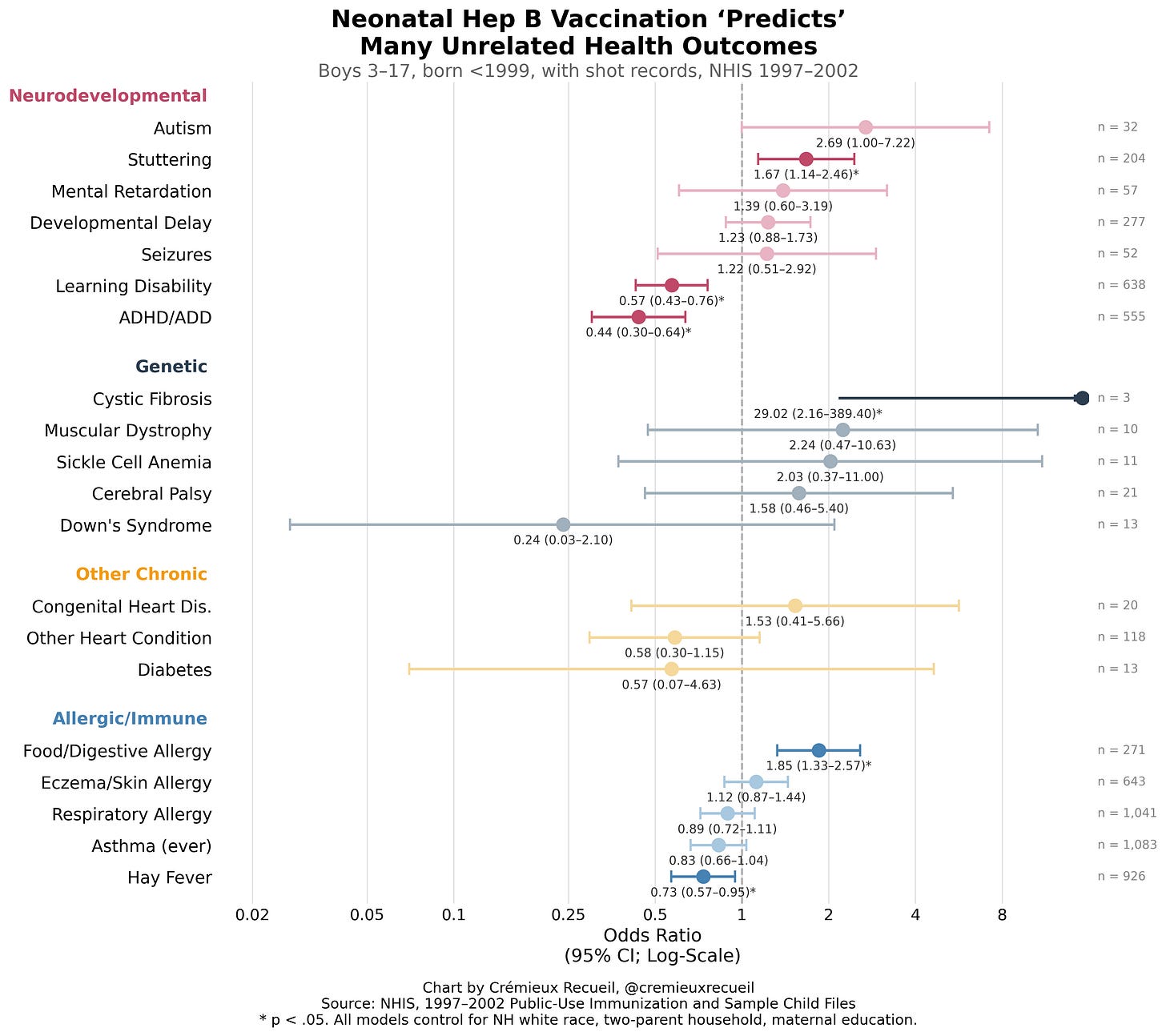

Let’s Be Serious About Falsification

We can and should go beyond the sorts of outcomes that G&G mentioned to mislead readers. If we do that, we’ll see that the supposed effects of hepatitis B are much more ambiguously bad than they let on.

Consider, for example, that hepatitis B vaccination is related to significantly and substantially and very robustly lower rates of learning disability and ADHD/ADD. It’s also somehow related to very high rates of cystic fibrosis, and—amazingly—to both higher rates of food and digestive allergy and lower rates of hay fever.

None of these results make much sense. Obviously the hepatitis B vaccine doesn’t raise kids’ IQs or help them sit down in their chairs in class. It also doesn’t cause genetic conditions that it’s as robustly associated with as it is with autism, such as muscular dystrophy or sickle cell anemia, and it doesn’t protect against Down’s syndrome, which is detectable before birth. And the idea of it both causing and reducing rates of allergy suggests a degree of biological specificity that nothing has ever been shown to achieve. And if we add the girls in, we see this:5

Adding in girls—because there was never any reason to exclude them in the first place—we see that the autism finding becomes nonsignificant, while the others that were significant, remain significant, and a few more become significant, but they’re mostly in the direction of vaccination being good (lower rates of asthma and respiratory allergy) or implausible in its effects (cerebral palsy). If G&G were more serious, they’d have written about how hepatitis B vaccination makes kids smarter! Instead, they wrote about one of their less robust findings. Pity.6

Conclusion VI: Using G&G’s own methodology paints a murkier picture of hepatitis B vaccination effects. Their claimed link to autism is much more tenuous than the highly robust negative link to learning disability and ADHD. Clearly both things reflect confounding.

Obvious Tests to Run

G&G’s specification isn’t even the most obvious specification to run. They had no theoretical justification for running the tests they did, so why didn’t they run other ones? Presumably, if asked, they could fall back on saying that they were doing some sort of test of the childhood vaccination. However, that would leave them in the awkward position of having done a very poor quality test!

If other plausible tests support their results, surely their case is helped. If they show something else, then they have explaining to do. So, I ran some dose-response and timing tests, and here’s what I got: bupkes.

Effect Exaggeration

All of these results are based on extremely small samples of kids with autism, like 9 cases here, 13 cases there. The cystic fibrosis result is based on 3 or 5 cases depending on if you just use boys or the whole group. These results are incredibly tenuous, and displacing a single boy from the group with both hepatitis B vaccination and autism to the other group would result in a nonsignificant effect. When your result depends on one kid in a cohort of thousands, consider disbelief, consider recognizing that you have very low statistical power.
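To make the one-kid fragility concrete, here is a rough sketch using a plain 2x2 table with a Wald test on the log odds ratio. The counts are hypothetical and unweighted, picked only so the result sits near the threshold; this is not G&G's survey-weighted logistic regression, and the function name is mine.

```python
from math import exp, log, sqrt
from statistics import NormalDist

def odds_ratio_wald(a, b, c, d, alpha=0.05):
    """Odds ratio, Wald confidence interval, and two-sided p-value.

    a: exposed cases      b: exposed non-cases
    c: unexposed cases    d: unexposed non-cases
    """
    log_or = log((a * d) / (b * c))
    se = sqrt(1 / a + 1 / b + 1 / c + 1 / d)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    lo = exp(log_or - z_crit * se)
    hi = exp(log_or + z_crit * se)
    p = 2 * (1 - NormalDist().cdf(abs(log_or) / se))
    return exp(log_or), lo, hi, p

# Hypothetical counts (illustration only).
before = odds_ratio_wald(9, 1291, 19, 6181)
# Reclassify one exposed case as an unexposed case.
after = odds_ratio_wald(8, 1291, 20, 6181)

for label, (or_, lo, hi, p) in (("before", before), ("after", after)):
    print(f"{label}: OR = {or_:.2f}, 95% CI {lo:.2f} to {hi:.2f}, p = {p:.3f}")
```

In this toy table, moving a single child takes the interval from excluding 1 to including it. With single-digit exposed case counts, the standard error is dominated by the 1/a term, so every individual case carries enormous weight.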

One of the funny consequences of low statistical power and p-hacking is that published effects are huge. This is because the effects have to be in order to be significant given how imprecisely they’re estimated. We can directly assess the impact of this effect size exaggeration by projecting how much statistical power we had.

This study obviously has very little statistical power. With 32 autism cases and 9 of them being exposed, this is a very tiny effective sample for a rare outcome. The exposure applies to 18% of the sample, so the comparison is very asymmetric. G&G also searched across subgroups (boys, born <1999 only, neonatal timing only) to land on the one specification that hit their magical p < 0.05 alpha threshold. At 6% power, the true OR consistent with this design is about 1.23, and the observed OR is exaggerated 2.2-times over. At 8%, the true OR is 1.32, or 2-times exaggeration.

If the true OR was 1.05, they had 5% power to find it (exaggeration: 24x). If it was 1.20, they’d have had 7% power (7x). If it was a 50% increase in autism risk, they’d have had 13% power (3x). Even a doubling in autism risk is just 28% power (2x). Obviously this was an underpowered exercise and the p-hacking evinced above means that whatever we wound up with is a severely incorrect and unrealistic effect size.
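The power and exaggeration figures can be approximated with a normal-theory calculation in the style of a design analysis. This is a sketch, not my original analysis code: it assumes the estimated log odds ratio is normally distributed and uses a standard error of 0.5 on the log-odds scale, a value I am assuming here because it roughly reproduces the numbers quoted above. Exaggeration is measured on the log-odds scale.

```python
from math import log
from statistics import NormalDist

STD_NORMAL = NormalDist()

def power_and_exaggeration(true_or, se_log_or, alpha=0.05):
    """Two-sided power and expected exaggeration ratio for a true OR > 1.

    Exaggeration is E[|estimate| given significance] / true effect,
    computed on the log-odds scale from truncated-normal means.
    """
    mu = log(true_or) / se_log_or          # true effect in standard-error units
    z_crit = STD_NORMAL.inv_cdf(1 - alpha / 2)
    a = z_crit - mu                        # cutoff for a significant positive estimate
    b = -z_crit - mu                       # cutoff for a significant negative estimate
    p_hi = 1 - STD_NORMAL.cdf(a)
    p_lo = STD_NORMAL.cdf(b)
    power = p_hi + p_lo
    # E[|estimate| * 1(significant)], in standard-error units
    mean_abs_sig = (mu * p_hi + STD_NORMAL.pdf(a)) + (-mu * p_lo + STD_NORMAL.pdf(b))
    return power, mean_abs_sig / power / mu

ASSUMED_SE = 0.5  # assumption: standard error of the log odds ratio

for true_or in (1.05, 1.20, 1.50, 2.00):
    power, exaggeration = power_and_exaggeration(true_or, ASSUMED_SE)
    print(f"true OR {true_or:.2f}: power {power:.0%}, exaggeration {exaggeration:.1f}x")
```

The pattern is the point: the smaller the true effect, the more a significant estimate has to overshoot it, because only the wildest draws clear the significance bar.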

Is Thimerosal Even Associated With Autism?

The stated motivation for testing the association between the hepatitis B vaccine given in a specific set of years and autism is that the vaccines once contained thimerosal. Of course, they no longer do, but autism has kept on rising with no detectable changes related to the phaseout of this ingredient. This makes sense, because heavy metals do not play a causal role in autism, and the amount of mercury in vaccine preparations was always a vanishingly small amount that the body reliably purges right away. So, is thimerosal vaccination associated with autism anywhere? No.

Wrap-Up

Gallagher and Goodman’s paper is currently under investigation by the venue that published it. That’s with good reason, because the paper was obviously p-hacked, it’s filled with what is at best evidence of incompetence and at worst evidence of fraud, and it’s caused harm by misleading people about vaccines that allow us to keep down the prevalence of a horrible, preventable disease. Let’s contribute to that investigation with some points:

G&G’s result is fragile. If one kid swaps groups, the result becomes nonsignificant

G&G’s analysis isn’t causally informative in the first place

G&G’s specification is cherrypicked

G&G’s result does not survive multiple comparisons/multiplicity correction

G&G claimed to run tests they either did not, or which they misreported

G&G ran tests that made no sense

G&G’s study was obviously underpowered, leading to an inflated effect size

G&G’s own methodology shows that hepatitis B vaccination is actually beneficial

G&G’s theory of their paper has been disproven time and again

All of this should’ve been caught in peer review but wasn’t. What are we doing here?

The whole anti-vaccine movement was born out of fraud. The Wakefield Lancet Controversy was a scam to demonize one type of vaccine to sell more of another, and even though the fraud was obvious and the results were never plausible, the movement has not died. In fact, the movement is stronger than ever, and it’s presently ruining the health of thousands of American children who it’s foisting measles and other preventable diseases on. Ironically, anti-vaxxers often talk about other people doing fraud in support of vaccines; thankfully, the childhood vaccine schedule has never relied on fraud and there is no way to make the case it has with any evidence.