Wearables Mostly Don't Work

The latest fad in nutrition and exercise just isn't that helpful for most people

This was a timed post. The way these work is that if it takes me more than an hour to complete the post, an applet that I made deletes everything I’ve written so far and I abandon the post. You can find my previous timed post here.

Wearables are all the rage. From FitBits to WHOOP bracelets to Oura rings to smart glasses, continuous glucose monitors (CGMs), metabolism-tracking patches, and the Apple Watch, everyone in the fitness space seems to be wearing something that tracks their health, whether they’re monitoring their VO2 max and heart rate variability or just the basics like sleep and heart rate.

A lot of people in that space also think wearables help to improve health. Naturally, then, many have taken to promoting wearables as a tool for health improvement. But do wearables actually help? It’s intuitive that they might. For example:

Wearables can make invisible health markers visible so people can act on them.

Wearables can provide immediate feedback on health habits: do more steps, sleep more, eat a slice of pie, and you’ll get numbers back right away so you can know how to adjust your habits.

Many wearables come with reminders, nudges, and trackers that might help people to stick to their habits and form healthier ones.

Some wearables use gamification and include social and personal accountability mechanisms like badges, streaks, leaderboards, and workout sharing, possibly providing users with an external locus of control.

Wearables support N-of-1 learning, which could feel empowering and promote consistency.

Some wearables provide clinic-level monitoring and early detection for serious health issues like atrial fibrillation, sleep apnea, and so on, and users can treat them like a way to get early alerts that let them act sooner for better results.

Notice my qualifying words: “can”, “might”, “possibly”, “could”. All of this is well and good in theory, but theory only matters if it translates to the real world! Does it? No.

Just the Evidence

The biggest question for the most common types of wearables is: do they increase physical activity? We’re looking for biobehavioral feedback, priming, sunk costs (whatever you want to argue from), and we do see evidence for it. Basically every review suggests that there is a small-to-modest effect on physical activity.

Sometimes this effect is tenuous. For example, in Au et al.’s 2024 systematic review and meta-analysis on wearable activity trackers for children and adolescents, trim-and-fill rendered the effect size for daily steps nonsignificant (-0.01; 95% CI: -0.35-0.33). For measured “moderate-to-vigorous physical activity” (MVPA, a common outcome in this literature), the effect size held together after adjustment, but was small (-0.14) and marginally significant (p = 0.01). In Wu et al.’s 2023 systematic review and meta-analysis on wearable activity trackers for older adults, they were underpowered to investigate publication bias, but their trim-and-fill for MVPA nonetheless still halved their effect size from about 0.54 d (0.36-0.72) to about 0.25-0.26 d (~0.05-0.46), though it retained significance (marginally: p ≈ 0.02 after correction).
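To make the arithmetic behind those significance claims concrete, here is a minimal sketch that backs out a standard error, z statistic, and two-sided p-value from a reported estimate and 95% CI. It assumes a symmetric, normal-theory interval, which is my assumption and not necessarily how either review computed its intervals; the function name `p_from_ci` is hypothetical, and the inputs are the rounded figures quoted above.

```python
import math

Z_95 = 1.959964  # critical value for a two-sided 95% normal interval


def p_from_ci(estimate, lower, upper):
    """Recover (se, z, p) from a point estimate and its 95% CI.

    Assumes the interval is estimate +/- 1.96 * se (symmetric, normal theory).
    """
    se = (upper - lower) / (2 * Z_95)
    z = estimate / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2))
    p = math.erfc(abs(z) / math.sqrt(2))
    return se, z, p


# Figures as quoted in the text (rounded, so results are approximate).
reported = {
    "Au et al., daily steps, trim-and-fill": (-0.01, -0.35, 0.33),
    "Wu et al., MVPA, unadjusted": (0.54, 0.36, 0.72),
    "Wu et al., MVPA, trim-and-fill": (0.25, 0.05, 0.46),
}

for label, (est, lo, hi) in reported.items():
    se, z, p = p_from_ci(est, lo, hi)
    print(f"{label}: se={se:.3f}, z={z:.2f}, p={p:.3g}")
```

Run on the quoted numbers, the adjusted MVPA estimate for older adults lands near the p ≈ 0.02 mentioned above, while the adjusted steps estimate for children is nowhere near significance.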

Effects in this literature start off not being very large, and they get meaningfully smaller if you’re able to account for publication bias. Some reviews have gone so far as to note that the fewer studies a meta-analysis includes, the more positive its results seem to be, which is a clear signal of publication bias. Charted:

A lot of the hype comes from rarely-reported, relatively novel outcomes (something that would help a researcher stand out when it comes to getting cited!) and is probably driven by selective publication, which tends to inflate effect sizes.
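A toy simulation shows how selective publication inflates a pooled effect. Everything here is an assumption chosen for illustration, not an estimate from the wearables literature: the true effect of 0.1 d, the study sizes, the rule that significant positive results are always published, and the 20% publication rate for everything else.

```python
import random


def simulate_studies(n_studies=2000, true_d=0.1, publish_null_prob=0.2, seed=0):
    """Simulate two-arm studies of one true effect with selective publication.

    Returns a list of (observed_d, se, published) tuples.
    """
    rng = random.Random(seed)
    studies = []
    for _ in range(n_studies):
        n_per_arm = rng.randint(20, 200)
        se = (2 / n_per_arm) ** 0.5  # rough SE of a standardized mean difference
        d = rng.gauss(true_d, se)
        significant = d / se > 1.96
        # Significant positive results always appear; others only sometimes.
        published = significant or rng.random() < publish_null_prob
        studies.append((d, se, published))
    return studies


def pooled_effect(studies):
    """Fixed-effect (inverse-variance weighted) pooled estimate."""
    weights = [1 / se ** 2 for _, se, _ in studies]
    return sum(w * d for w, (d, _, _) in zip(weights, studies)) / sum(weights)


all_studies = simulate_studies()
published = [s for s in all_studies if s[2]]
print(f"pooled d, all studies:    {pooled_effect(all_studies):.2f}")
print(f"pooled d, published only: {pooled_effect(published):.2f}")
```

The pool of all studies recovers something close to the true effect, while the published-only pool comes out noticeably larger. Corrections like trim-and-fill try to undo that gap from the published studies alone.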

The effects in the literature get even smaller and more statistically dubious when you remember that the analysts are often medical doctors, and MDs are not generally all that good at stats.1

For example, here’s something any economist but few doctors would catch. In the Wu et al. review I cited, they reviewed three other outcomes: daily steps, total daily physical activity, and sedentary time. There looked to be evidence of publication bias for all of these outcomes, but there was too little power to tell. The thing the economist would’ve pointed out, though, is that each result-level meta-analysis included multiple estimates per study, without clustering.

If a qualified economist had been on the team, they would have noticed the standard errors were unclustered and told the exclusively-medical team that their results were too precise because the estimates were dependent on one another. They basically ended up estimating the same things, or methodologically-dependent things, multiple times, with no accounting for that fact! If their meta-analytic results had been estimated with clustering, the significance and effects would change, usually subtly, but sometimes dramatically.
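To see why ignoring dependence makes results look too precise, here is a minimal sketch that compares the pooled standard error when same-study estimates are treated as independent with the pooled standard error when they are allowed to be correlated. It is a simplified stand-in (averaging within study under an assumed common correlation `rho`), not cluster-robust variance estimation and not the reanalysis described in this post. The study list and the `rho` values are made up for illustration.

```python
import math


def pooled_se(studies, rho):
    """Standard error of a fixed-effect pooled estimate.

    studies: list of (m, v), where m is the number of estimates a study
             contributes and v is the sampling variance of each estimate.
    rho:     assumed correlation between estimates from the same study.
             rho = 0 reproduces the naive "all estimates independent" SE.
    """
    information = 0.0
    for m, v in studies:
        # Variance of the mean of m equally correlated estimates.
        var_of_study_mean = v * (1 + (m - 1) * rho) / m
        information += 1 / var_of_study_mean
    return math.sqrt(1 / information)


# Hypothetical meta-analysis: four studies contributing 4, 1, 3, and 2 estimates.
studies = [(4, 0.04), (1, 0.02), (3, 0.05), (2, 0.03)]

for rho in (0.0, 0.5, 0.8, 1.0):
    print(f"rho={rho:.1f}: pooled SE = {pooled_se(studies, rho):.4f}")
```

As `rho` rises, the pooled SE grows: at `rho = 1` each study effectively contributes a single estimate, however many it reported. The same pooled effect divided by a larger SE gives a smaller z statistic, which is how a "significant" result can stop being one once the dependence is respected.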

For total daily physical activity, accounting for clustering means going from a significant 0.21 d to a nonsignificant 0.29 d; for daily steps, the result goes from a highly significant 0.59 d to a still highly significant 0.57 d, but now there’s a significant trim-and-fill result that brings it down to a still-significant 0.48 d; for sedentary time, the result practically doesn’t change, but the trim-and-fill does make the result nonsignificant (d = -0.09, p = 0.07).2

These and more obvious, sometimes-important, sometimes-not statistical issues are the norm for wearable studies, and if you accounted for them consistently, you would find that the already modest effects wind up being considerably smaller than they appear. Throw in that many of the studies have bad designs (how do you even blind a wearable study?), highly motivated volunteers, temporary novelty and participation effects, and issues with attrition and compliance, and reasonable people will conclude that the effects on these proxy outcomes need to be downweighted a lot.

And that last sentence is really important: these are proxy outcomes. No one should actually care about whether outcomes like physical activity and daily steps move up unless they elicit health changes—the real outcomes of interest! Fortunately for us, because these proxy outcomes are so widely accepted, researchers tend to treat them as primary, and they publish papers on the basis of significant results for said proxies, with less focus on actual health improvements. That makes inferences with respect to actual health improvements biased upwards to the extent they correlate with the proxy outcomes, but otherwise effectively unbiased. So, what do those show? Not a lot!

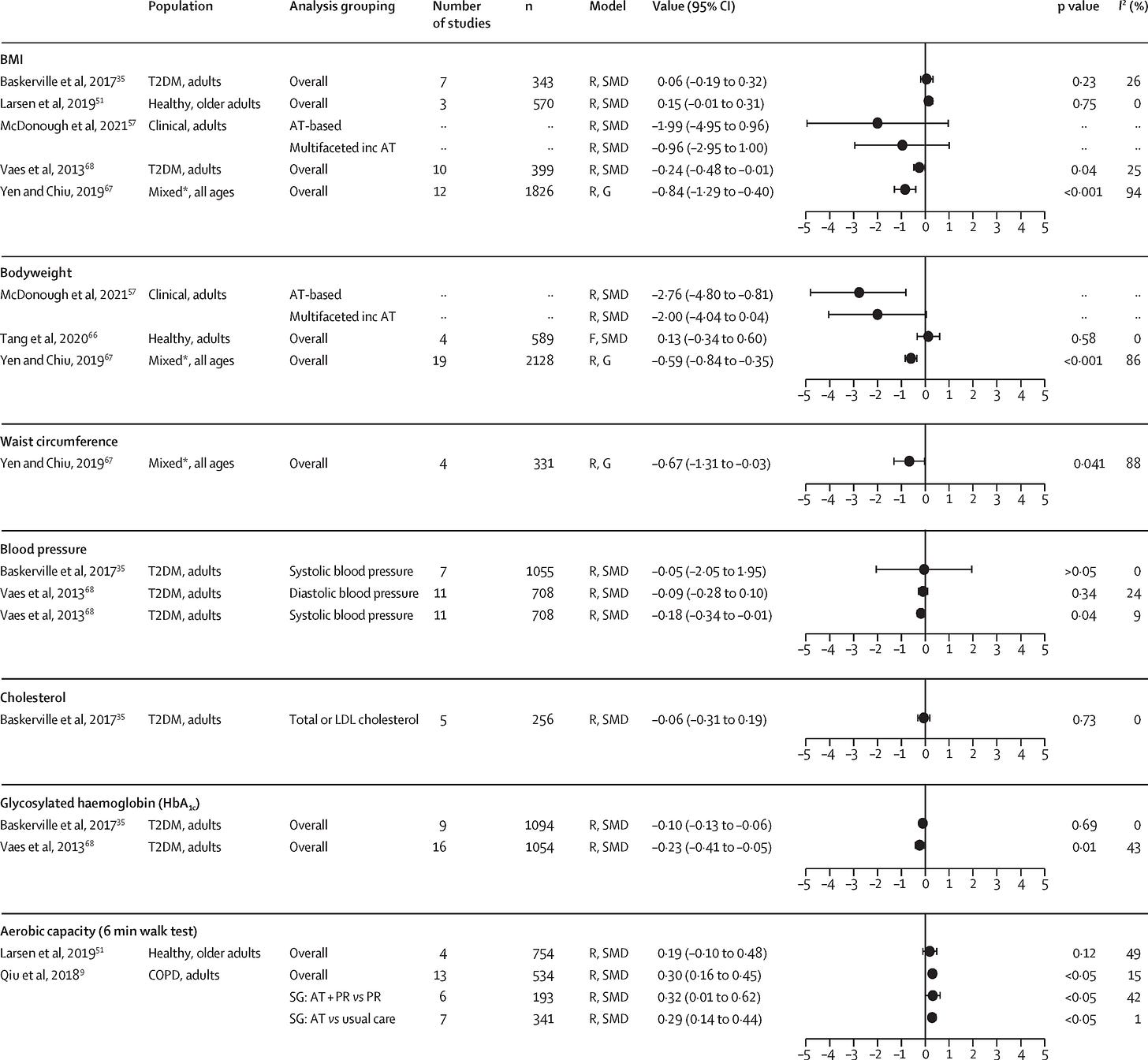

Consider this: in Ferguson et al.’s 2022 review, they found that meta-analyses—which are usually uncorrected for the issues noted earlier—generally do not show much in the way of physiological benefits, with smaller effects in healthier and more population-representative samples.

The evidence for psychosocial outcomes is even worse:

This comes up again and again: there seem to be effects on promoting healthy behaviors, but they don’t translate to effects on body composition, physical risk improvements, psychosocial improvements, etc., and where they do, the samples are usually at-risk, so generalizing to the general population is not advisable.

If we move away from activity monitoring towards CGMs, we see that, though diabetics obviously benefit and are protected by having them, normal people don’t get a whole lot of benefit. Think about this for just a moment and it becomes really obvious: What is a normal person even supposed to do with a CGM? Are they going to monitor their diet better? For what purpose? So they avoid glucose spikes that don’t tend to affect healthy people’s health much at all? It’s hard to even take CGMs seriously as something to recommend to the general, non-diabetic population.

When we give nondiabetics CGMs, we see weak evidence for small improvements in postprandial and fasting glucose levels and variability, steps and walking time, weight, and a handful of other health proxy outcomes.3 There is virtually no evidence for changes to hard outcomes. And frankly, we shouldn’t expect anything, because there’s just not much in the way of health information for a normal person to glean from a CGM.

At best, I suspect non-diabetic CGM users will get what my friends and I have experienced: you learn what spikes your glucose, and you manage to cut down on alerts with some habit changes of dubious importance. The novelty decays into background noise; the alerts and reminders become less frequent and more obviously errant and ignorable; once you’ve learned that you sleep worse when you drink and less sleep makes you more tired, the wearables just become a source of cognitive load.4

Before wrapping up, I want to mention something else: many wearables are unreliable. Counting steps and heartbeats is easy, generally reliable, and not worth a whole lot. Sleep duration? Sleep staging? SpO₂? Atrial fibrillation? Don’t trust this stuff too much. Calories and energy expenditure? Stress? Cuffless blood pressure? Really don’t trust that.

One review found accurate step counts and heart rates with poorer reliability for energy expenditure estimates. A new study of the Apple Watch found results that were sometimes good, but for the more advanced stuff it was all over the place, with this fairly expensive device having only moderately accurate step counts and sleep tracking. Reliability is massively heterogeneous, both within and between devices, and certain measurements need routine recalibration that users might forget to do.5

The best evidence in favor of mass wearable adoption is a chain of “can’s”: Wearables can surface hidden health signals; wearables can nudge behavior; wearables can sustain motivation.

The empirical record says that chain is not very strong; in fact, it’s usually broken.6

Behavioral effects are small and shrink further once you account for statistical and sampling biases; engagement and compliance fade as device novelty decays; and the endpoints that actually matter—weight, cardiovascular risk, mental health, HbA1c, and other hard outcomes—rarely move outside of high-risk, tightly-coached samples (and even their gains tend to attenuate with time). Add that most headline metrics are noisy or model-driven and you end up selling people devices that mostly add cognitive load and spiffy dashboards, only rarely resulting in people getting healthier.

Telling everyone to use wearables will generate a lot of data but not much health.

1. I love MDs, but I love them more when they let me review their papers before they publish them.

2. The result for MVPA is an increase from 0.54 d to 0.55 d. We saw before that trim-and-fill halved the unclustered effect. With clustering, it brings it down to a more respectable 0.42 d. The authors were in the rare situation where they actually would’ve been helped here!

For total daily physical activity, the trim-and-fill does nothing with clustering because no studies get filled.

3. And remember, this literature is still biased towards finding effects, so down-weight evidence accordingly.

4. There’s evidence that even diabetics stop wanting to wear CGMs, without concomitant health effects.

5. A word to wearables companies: remind people about this fact loudly and often!

6. As we should expect given the abysmal record of lifestyle advising and coaching. I tell people this is the Ozempic lesson: you will not actually motivate people to do things. Wearables won’t either. They work to remind people to be active for a short while, then the novelty fades and people go back to their habits, just as dietary changes tend to be ephemeral. Give people actual solutions like simple, once-weekly shots, and that will work; tell them to change how they live their lives, and you’ll only rarely see them sticking to it.
